Background

Ever realize you’ve been spending too much of your time writing the same report or creating the same data visualization day after day, week after week? I have. Recently I’ve been putting together a data set from publicly available data and using it to train a time series forecast model before putting the resulting predictions onto a Tableau dashboard. Considering almost 100% of this was being done online or in the cloud already, I knew there was a better way. That’s when Chris Miller, founder and CEO of Cloud Brigade in Santa Cruz, California introduced me to AWS Glue. Now I can spend my time making data-driven decisions rather than building the same reports every day.

Challenge

Is there a way to utilize the AWS Cloud Infrastructure to automate my daily data collation, transformation and visualization?

Benefits

- Dashboard is ready to view

- Reduces decision-making time

- Frees up human resources

- Improves creativity

Business Challenges

Irresolvable Complexity: With AWS Glue, there’s no need to use multiple services, OAuth keys, daily manual extract, transform and load (ETL) jobs, or daily data uploads to a visualization tool.

Inefficient Systems/Processes: AWS Glue ties together your data lake in Amazon S3, your data collection source in Amazon Elastic Compute Cloud (EC2), and your Amazon QuickSight data visualization tools, with AWS Lambda and Amazon EventBridge automating every step. Code it once and let it run.

Skills & Staffing Gaps: Free your Data Scientist, Data Engineer, Database Administrator, or DevOps and Software Engineers to do new and more exciting tasks every day by automating the workflows that already work.

Antiquated Technology: If you’re not leveraging cloud infrastructure to make your business more efficient and agile, what are you even doing?

Business Bottlenecks: Don’t let your decision-making be delayed by the creation of your reports.

Excessive Operational Costs: Like it or not, labor hours cost money, and you don’t want your software engineers or data scientists spending all of their time running the same old tasks that AWS Glue can automate for you.

Solution and Strategy:

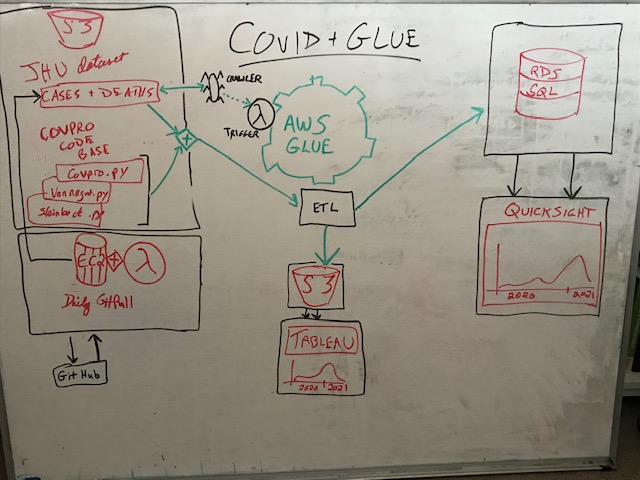

The first thing I had to do was draw up the workflow on my whiteboard. Hey, even serverless tech relies on human thinking, and this human thinks best with the sweet smell of dry-erase marker.

Working Backwards:

- Final Goal: An automated Amazon QuickSight or Tableau dashboard displaying the number of COVID-19 cases and reported deaths per day in Santa Cruz, California

- Use AWS Glue to send an optimized CSV file to my data lake in Amazon Simple Storage Service (S3)

- Use AWS Glue to perform the ETL, transforming the raw data into the optimized format that we need for modeling and/or visualization

- Use Amazon EC2 in order to run a Git pull every day and send the data to the Amazon S3 bucket in our data lake

- Use AWS Lambda, triggered by an Amazon EventBridge event, to fire up the Amazon EC2 instance every day for its daily Git pull
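
The last step in that list, the one that kicks everything off each day, can be sketched in a few lines of Python. This is a minimal sketch rather than the exact function from my project: it assumes the instance ID is stored in an environment variable that I've called `COLLECTOR_INSTANCE_ID` here (a hypothetical name), and that the Lambda execution role is allowed to call `ec2:StartInstances`.

```python
import os


def start_collector_instance(ec2_client, instance_id):
    """Start the EC2 instance and return the state it is moving into."""
    response = ec2_client.start_instances(InstanceIds=[instance_id])
    # start_instances returns one entry per instance under "StartingInstances".
    return response["StartingInstances"][0]["CurrentState"]["Name"]


def lambda_handler(event, context):
    """Entry point invoked by the scheduled Amazon EventBridge rule."""
    # boto3 is imported here so the helper above can be exercised
    # locally with a stand-in client.
    import boto3

    # Hypothetical environment variable holding the collector's instance ID.
    instance_id = os.environ["COLLECTOR_INSTANCE_ID"]
    state = start_collector_instance(boto3.client("ec2"), instance_id)
    return {"instance_id": instance_id, "state": state}
```

An EventBridge rule with a schedule expression such as `rate(1 day)` would be one way to invoke this handler daily. The Git pull and the upload to Amazon S3 would then run on the instance itself once it boots, and that part is not shown here.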

Technical Hurdles to Overcome:

Whether you’re using the AWS cloud infrastructure, a new smart watch, or even driving a John Deere tractor, things don’t just work without some effort and some trial and error.

For example, in the process of connecting these systems together, it is important that you are well versed in how to create new AWS Identity and Access Management (IAM) Policies and Roles. These are the security structures that control the permissions in your cloud infrastructure. “The Cloud” is just a nickname for the internet, after all, and so storing data up on the internet can leave it exposed to the nefarious actors that attack when your database is left unguarded. AWS IAM helps to keep your data and your systems safe, and it’s up to you to know how to properly wear this armor about your business.
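
To make that a little more concrete, here is a rough sketch of what a narrowly scoped IAM policy document could look like for a job that only needs to read and write one part of the data lake. The bucket name and prefix are placeholders I made up, and the set of actions is an assumption; your own job may need more or fewer permissions.

```python
import json


def build_bucket_policy(bucket_name, prefix=""):
    """Build an IAM policy document limited to one bucket (and optional prefix)."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Listing is granted on the bucket itself.
                "Sid": "ListDataLakeBucket",
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": [f"arn:aws:s3:::{bucket_name}"],
            },
            {
                # Reading and writing are granted on the objects under the prefix.
                "Sid": "ReadWriteDataLakeObjects",
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject"],
                "Resource": [f"arn:aws:s3:::{bucket_name}/{prefix}*"],
            },
        ],
    }


if __name__ == "__main__":
    # Placeholder bucket name and prefix, for illustration only.
    policy = build_bucket_policy("example-data-lake-bucket", "covid/")
    print(json.dumps(policy, indent=2))
```

The JSON this prints can be pasted into the IAM console or attached to a role. Granting only the actions and resources a job actually touches is what keeps the armor on.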

Also, I learned the hard way that you have to keep your data lake and your data engineering environments in the same AWS Region. If your Amazon S3 bucket is in Northern Virginia, for example, then your AWS Glue resources must also be built in the Northern Virginia Region. The same is often true when running Machine Learning models in Amazon SageMaker, though the boto3 Python library has some workarounds there if you end up in the wrong part of the world…virtually speaking of course.
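
A quick check up front would have saved me some of that pain. The sketch below is one possible way to do it, not something from the original project. It relies on a quirk of the Amazon S3 API: the `LocationConstraint` value comes back as `None` for buckets in Northern Virginia (`us-east-1`) and as the legacy value `EU` for some older buckets in `eu-west-1`. The function names are my own.

```python
def bucket_region(location_constraint):
    """Translate an S3 LocationConstraint value into a Region name."""
    if location_constraint is None:
        return "us-east-1"  # S3 reports None for Northern Virginia
    if location_constraint == "EU":
        return "eu-west-1"  # legacy value still returned for some older buckets
    return location_constraint


def check_same_region(location_constraint, glue_region):
    """Raise ValueError if the bucket and the Glue resources are in different Regions."""
    region = bucket_region(location_constraint)
    if region != glue_region:
        raise ValueError(
            f"Bucket is in {region} but Glue resources are in {glue_region}"
        )
    return region
```

With boto3, the first argument could come from `boto3.client("s3").get_bucket_location(Bucket=...)["LocationConstraint"]`, and the second from the Region your session is configured to use.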

This Was No Ordinary Project

In most businesses, you’ll have data automatically uploaded to a database, often a MySQL database fed by a PHP front-end, and that data can be queried after a daily upload into a data warehouse. Often analysts and mid-level managers are spending 20-40 hours a week running queries and building dashboards to study changes in Key Performance Indicators (KPIs), only to have to run these same queries the following week and start all over again to measure the changes.

Using AWS Glue, we are now able to work with these businesses to free up those analysts to make data-driven decisions on what to do, rather than figuring out how to tell a story with the data in the first place.

Results

With some elbow grease, I was able to squeeze days’ worth of work into a seemingly instant end-to-end application.

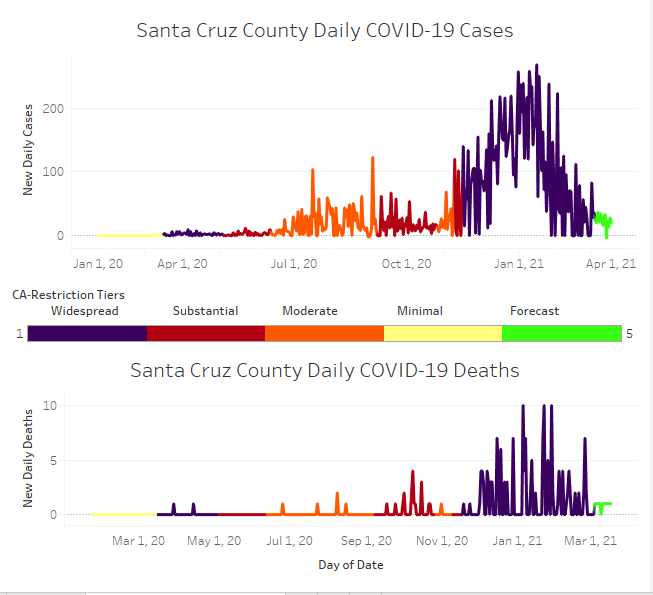

Here is an example of the finished product in Tableau. I also added in the results of a DeepAR time series forecast that utilizes a Recurrent Neural Network (RNN) from MXNet’s Gluon library on AWS. This dashboard adds the California COVID-19 Restrictions Tier Color Codes as a filter to the dashboard visual, so you can see how the change in the local restrictions levels affected the spread and mortality of the disease in Santa Cruz, California.

“I love creating new ostensible demonstrations of data, and automating the whole workflow allows me to learn from the data visualizations and make data-driven decisions rather than spending all of my time putting reports together.”

-Matt Paterson, Data Scientist, Cloud Brigade

Want to try it yourself? Check out the detailed tutorial that I created for this project!

Think your business data reporting could use some streamlining? Contact us today to learn if a Cloud Brigade custom solution is right for you.

What’s Next

If you like what you read here, the Cloud Brigade team offers expert Machine Learning as well as Big Data services to help your organization with its insights. We look forward to hearing from you.

Please reach out to us using our Contact Form with any questions.

If you would like to follow our work, please sign up for our newsletter.